If your personal trainer agrees with every discomfort you report, you should probably stop training with them.

You say squats feel wrong. They remove squats.

You say your shoulders burn. They change the movement.

You say recovery feels hard. They lower the load again.

For a moment, this feels like good service.

- You feel heard.

- You feel in control.

- The session adjusts instantly around your preferences.

But if every discomfort becomes a reason to reduce demand, the body keeps moving in the wrong direction.

Strength does not build.

Your breathing stays shallow when effort begins.

The doctor will not be impressed later, even if today’s session felt easier.

Products behave exactly the same way.

They do not improve without a certain amount of demand and discipline.

I've Been Watching How Clients Use AI

They’re excited. Genuinely.

They feel like they’ve regained control. The "old way" of working suddenly feels outdated.

Tools like Lovable, Claude, GitHub Copilot, and Google Gemini all trigger roughly the same reaction.

- A prompt becomes a layout.

- A request becomes working code.

- A rough thought becomes something visible enough to react to.

That first result often feels astonishing.

And it should.

Because for the first time, many people are experiencing direct output without waiting for interpretation.

But something interesting happens after the initial excitement.

They hired us for perspective. For judgment. For decades of pattern recognition built across hundreds of client projects.

Now some of them quietly begin to rationalize AI as a way to skip that layer entirely.

Not because they no longer value expertise.

But because AI makes expertise feel temporarily unnecessary.

And honestly, in their position, I would probably feel the same at first.

Because the first thing AI removes is waiting.

And waiting is exactly what many founders dislike most when something feels urgent.

If an idea feels important, every hour between thought and visible output starts to feel unnecessary.

That is where the attraction becomes unusually strong.

The cost appears later.

The Difference Is Subtle But Expensive

I don’t believe AI can replace what hundreds of client projects slowly teach you.

Let me explain.

The moments when customers say one thing while their behavior points somewhere else.

The recurring lesson that what people ask for is often not what improves the outcome.

AI knows many of these patterns too — in theory, often at enormous scale.

In fact, one of its strongest abilities is exactly that:

- to process more signals than any single person could hold at once,

- to surface repetition inside large datasets,

- to detect tendencies that remain invisible when looked at manually.

That is where AI can become unusually valuable.

After something exists.

After users have moved.

After friction leaves traces.

But that is not where many clients begin.

They often use AI earlier — where judgment matters most.

- For layout.

- For messaging.

- For interface decisions.

For choices that still depend less on volume and more on knowing what deserves emphasis.

And there the problem changes.

Because AI still needs direction.

And direction still depends on knowing what to ask.

Ask vaguely, and it will still return something plausible. But plausible isn't the same as right.

It is too much to expect every founder, client, or team to suddenly carry years of interface judgment, communication experience, and user behavior insight just because AI now makes production easy.

AI can bypass your lack of tool skills.

It can't bypass your lack of pattern recognition from solving real problems with real users.

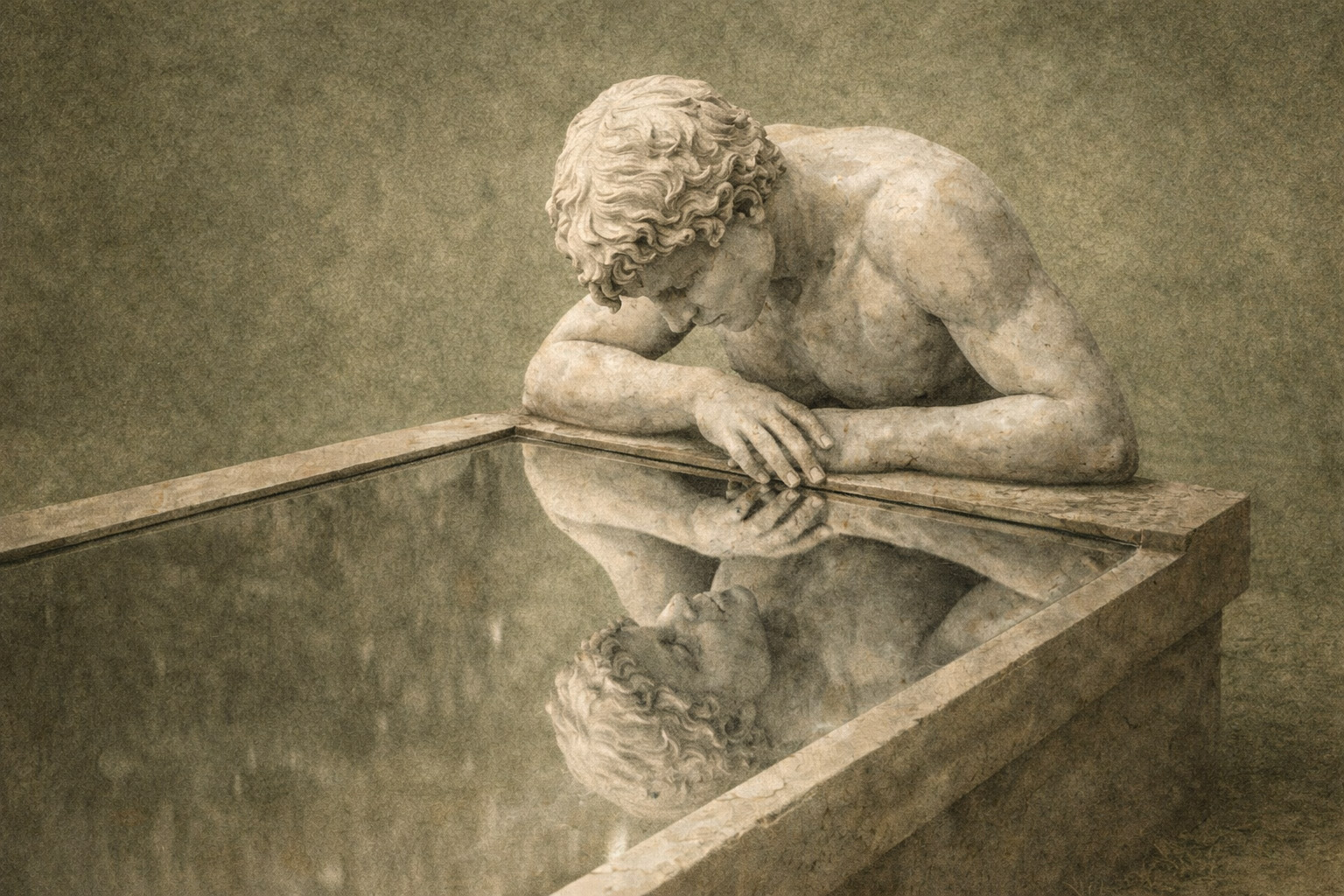

AI Wasn't Built to Argue With You

That's not a flaw. It's by design.

AI was built to help. To assist. To continue.

But that means it won't resist weak requests the way a human expert might.

A designer might say: "This looks good, but it weakens your primary signal."

AI says: "Here are three variations."

One protects you from your convincing first instinct.

The other gives you what you asked for.

What Happens When Production Becomes Cheap

For the first time, almost anyone can ask for a website, a feature layout, a product description, a visual identity — and receive an answer immediately.

Then another. Then ten more.

The system doesn't hesitate. It doesn't become impatient. It doesn't ask whether the request itself deserves resistance.

It simply continues.

That responsiveness feels like progress.

And some of it is.

AI removes friction where friction was unnecessary. It accelerates drafts. It exposes alternatives quickly.

But the same strength hides a weakness.

When production becomes cheap, restraint becomes rare.

And restraint is where quality lives.

Klarna Thought AI Could Replace Customer Service

In 2024, Klarna's CEO proudly announced that "AI can already do all of the jobs that we, as humans, do."

They froze hiring. They said their AI assistant was doing the work of 700 customer service agents.

They claimed $10 million in savings.

By 2025, the CEO publicly admitted the AI-driven transition negatively affected service and product quality.

They started hiring again — but as gig workers, not permanent staff.

In one survey, 55% of companies that had made AI-driven layoffs said they regretted them.

The output was cheaper. The judgment was gone.

And customers noticed.

The Problem Isn't Catastrophic — It's Quiet

A page becomes slightly too full.

A feature stays because it can stay.

A sentence survives because no one removed it.

An animation remains because it looked impressive in isolation.

Nothing collapses immediately.

But momentum weakens.

The product starts asking for more interpretation than it should.

And users rarely explain this directly.

They simply hesitate. Then leave.

AI Often Feels Most Empowering Exactly Where Expertise Used to Protect You

I noticed this long before AI.

At Nokia, even weak decisions often arrived with convincing arguments behind them.

Later, in startups, it looked the same.

The risk was rarely obvious stupidity.

It was usually a reasonable explanation attached to the wrong emphasis.

Sometimes two strong opinions collided, and I already knew what would happen:

the third option — the one neither side fully owned — would survive.

Not because it was ideal.

But because it created enough temporary agreement to move forward.

And sometimes that was still better than the weaker option being defended too confidently.

Because even imperfect mediation still introduced friction.

AI removes much of that friction.

And that is exactly why weak decisions can now settle faster than before.

I'm Not Against AI — I Use It Daily

But I treat it the way dangerous tools should be treated.

A chainsaw is powerful. Essential, even.

But no one mistakes a chainsaw for judgment.

- It does not decide which tree should fall.

- It does not understand what stands nearby.

- It does not calculate what happens when the cut is slightly wrong.

That responsibility remains with the person holding it.

AI works the same way.

It amplifies force.

It does not replace consequence.

The Work AI Accelerates Was Never the Problem

A colleague told me something that stuck:

"Writing code faster is great. But coding was never the annoying part. Keeping the codebase intact year after year — that's the hard part."

The same applies everywhere.

Branding isn't hard because creating a logo takes time.

It's hard because you have to stop the brand from chasing every new trend. Keep it disciplined. Consistent. Recognizable.

Copywriting isn't hard because writing sentences is slow.

It's hard because you have to maintain tone of voice across hundreds of touchpoints. Keep it aligned with the company's mission. Make it feel like the same voice, even when different people write it.

AI can accelerate the output.

But it can't maintain the discipline.

That's still slow work. Human work.

Where Expertise Matters Now

AI hasn't reduced the need for expertise.

It's changed where expertise matters.

Less around raw production.

More around disciplined refusal.

The valuable question isn't: "Can this be made?"

That answer is increasingly yes.

The valuable question is: "Should this remain?"

That's slower. Less exciting. And much harder to automate.

Because good structure still depends on something AI doesn't naturally provide:

Knowing when to stop before abundance starts weakening intent.